Resourcefully accelerating a company’s time-to-market is the goal of any process optimization measure, especially when optimizing with Virtual Commissioning tools. However, such resourcefulness can only be sustained through the consistent collection and analysis of meaningful data from the components involved in a machine process. This data can be processed and interpreted live with Edge Computing technologies.

Edge Computing makes it possible to gain useful insights from the available data, not only into the very heart of a machine, but also into its entire circulatory system of data streams.

The Industrial Internet of Things (IIoT) is characterized by a large number of interacting participants, decentralized decision-making and thus the distributed processing of (real-time) data.

Using Virtual Commissioning tools allows testing both hardware and software for these complex use cases of the IIoT environment – even before a real plant is built. Furthermore, Virtual Commissioning can realistically provide the extensive and heterogeneous data exchange with either physical hardware in the loop or from simulated hardware models.

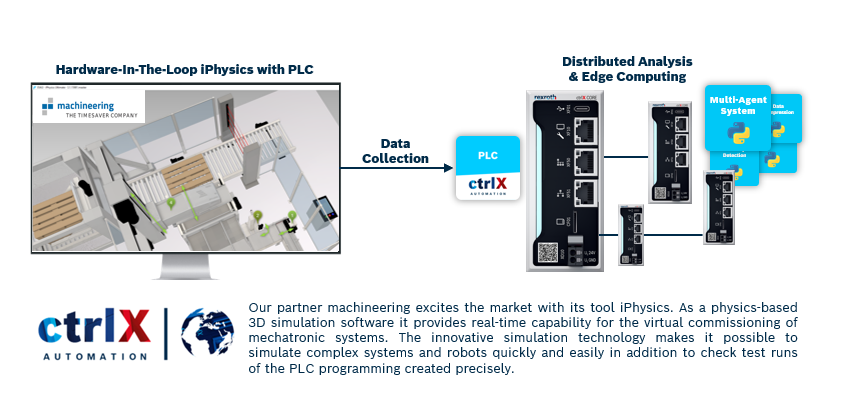

This article shows how the open interconnectivity of the ctrlX AUTOMATION ecosystem provides solutions for the virtual commissioning of complex systems with edge computing applications on ctrlX OS for a distributed analysis of data. The data to be analyzed by the apps to be virtually commissioned are generated by the simulation software iPhysics.

With the resourcefulness of ctrlX AUTOMATION and the solution built on it, we can enable and improve any data collection process and, beyond that, Edge Computing or even Machine Learning applications.

The built solution has proven to:

- Collect real-time data earlier in the product life cycle by connecting Hardware-In-The-Loop simulation data from iPhysics. Additional data can also be collected that might not otherwise be available from the real asset at all.

- Enable early optimization of computation parameters (hyperparameters) to improve the quality of the executed edge computing.

- Enable joint testing of software applications and hardware, especially when building complex system solutions with ctrlX AUTOMATION and legacy, proprietary components.

A deep dive into setup and use cases

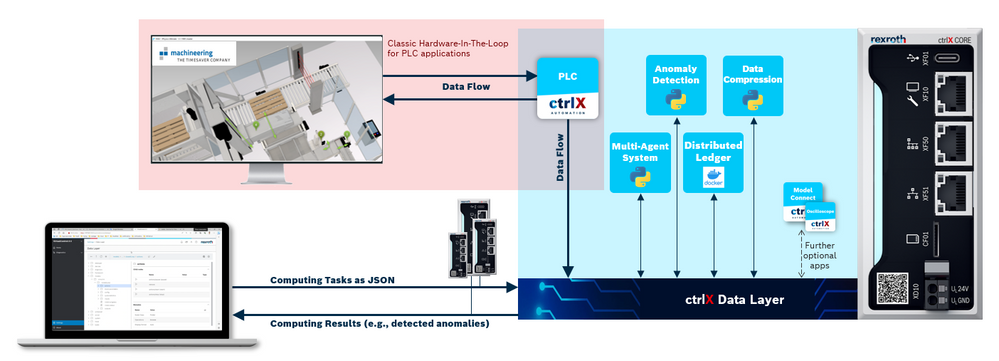

Using the ctrlX OS SDK, we can write our own ctrlX OS apps to run various edge computing use cases on a ctrlX CORE. As shown in the picture, each use case is handled by its own ctrlX OS app. The apps are connected to each other and to the simulation data in iPhysics through the ctrlX Data Layer. The ctrlX OS PLC app holds a classic connection to iPhysics to provide the hardware-in-the-loop simulation data to the ctrlX Data Layer.

We wrote ctrlX OS apps for the following use cases (and more!):

- Distributed Computing: Middleware-like systems for Distributed Stream Processing Systems (DSPS) link the physical layer, consisting of physical devices and data (streams), with the applications for data stream processing. They are highly complex because they interact with and depend on both infrastructure and logic (edge computing applications).

- Multi-Agent Reinforcement Learning for allocation of resources: Reinforcement learning agents running as an app on ctrlX decide how to allocate computational and communication resources for processing streaming data depending on the available resources.

- Data Compression to reduce the raw data and retain the information: Reducing the amount of data by compressing streaming data, e.g., sensor signals. Lossless compression is used to reduce redundancies while preserving all the information. Lossy compression is applied for the reduction of irrelevances, i.e., only relevant information is retained.

- Anomaly Detection for extraction of information: Kernel density estimation, a non-parametric statistical method, is used for detection of different kinds of anomalies (point anomaly, drifts, collective, and contextual anomalies) in streaming data, e.g., sensor signals, during runtime.

- Distributed Ledger Technology (DLT) and Smart Contracts for protection of information: Building a DLT network, e.g., with the DLT IOTA, makes the immutable recording of data and the use of smart contracts possible. The IOTA nodes can be operated as an app on ctrlX end devices.
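To make the difference between the two compression modes in the list above more concrete, here is a minimal sketch using only the Python standard library. It is not the compression used in the apps described here: the function names, the deadband filter chosen as the lossy method, the tolerance value, and the synthetic signal are all assumptions made for illustration.

```python
import json
import zlib


def compress_lossless(samples):
    """Serialize the samples and compress them with zlib (DEFLATE); fully reversible."""
    raw = json.dumps(samples).encode("utf-8")
    return zlib.compress(raw, level=9)


def decompress_lossless(blob):
    """Invert compress_lossless and return the original list of samples."""
    return json.loads(zlib.decompress(blob).decode("utf-8"))


def compress_deadband(samples, tolerance):
    """Lossy: keep a sample only if it moved more than `tolerance` from the last kept value.

    Returns (index, value) pairs. Smaller changes are treated as irrelevant and dropped.
    """
    kept = []
    last = None
    for i, value in enumerate(samples):
        if last is None or abs(value - last) > tolerance:
            kept.append((i, value))
            last = value
    return kept


def reconstruct_deadband(kept, length):
    """Rebuild a signal of `length` samples by holding the last kept value."""
    if not kept:
        return []
    out = []
    pos = 0
    current = kept[0][1]
    for i in range(length):
        if pos < len(kept) and kept[pos][0] == i:
            current = kept[pos][1]
            pos += 1
        out.append(current)
    return out


# Hypothetical sensor signal: a slow ramp with a small alternating disturbance
signal = [20.0 + 0.01 * i + (0.002 if i % 2 else -0.002) for i in range(1000)]

# Lossless: every sample is recovered exactly
blob = compress_lossless(signal)
restored = decompress_lossless(blob)

# Lossy: fewer samples are kept, and the error stays within the tolerance
tolerance = 0.05  # assumed value, would be tuned per signal
kept = compress_deadband(signal, tolerance)
approx = reconstruct_deadband(kept, len(signal))
max_error = max(abs(a - b) for a, b in zip(signal, approx))

print(f"lossless: {len(json.dumps(signal))} -> {len(blob)} bytes, exact: {restored == signal}")
print(f"lossy: {len(signal)} -> {len(kept)} samples, max error: {max_error:.4f}")
```

The tolerance is the kind of parameter that can be tuned early against simulated data: a larger value drops more samples but also discards more of the signal.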

In this setup with several ctrlX OS apps the ctrlX Data Layer on the ctrlX CORE acts as a data sink to provide any collected data from the simulation tool, here machineering iPhysics, to edge computing applications. The data sink is filled with information from the simulation tool and its connected or simulated machine components.

In this presented case, the ctrlX OS PLC Apps are the active collectors of Hardware-In-The-Loop data and potentially also fully simulated data from iPhysics. However, other apps such as the ctrlX OS Device Bridge App could also feed collected data from directly connected machines into the Data Layer for further usage.

Edge computing architectures with ctrlX OS

In general, ctrlX OS offers several options to combine and configure existing infrastructure with new infrastructure and components, to fulfill various use cases in a resourceful and sustainable manner. In any of our edge computing use cases, for instance, every collecting or computing ctrlX CORE can be seen as a ctrlX OS instance. With ctrlX OS, a pure software instance runs the ctrlX OS software stack on independent, perhaps already existing, hardware, such as many common server architectures.

The following graphic shows a feasible architecture:

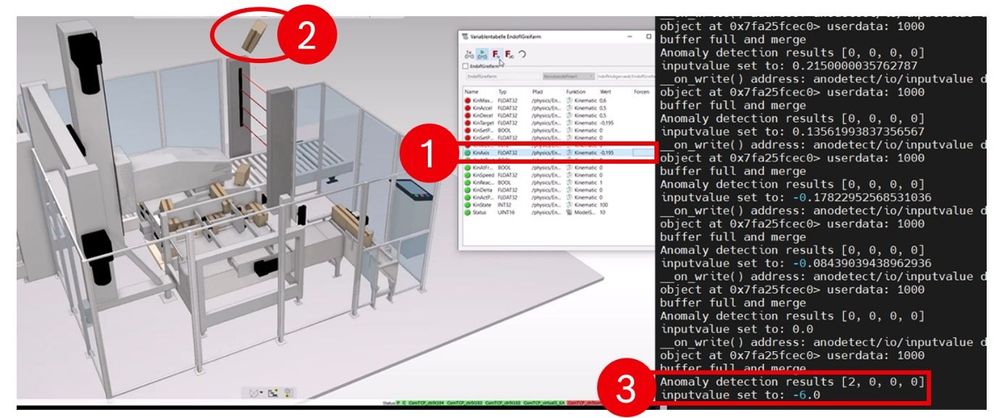

An example use case: Anomaly Detection

An interesting example use case is the detection of anomalies in a diverse, complex system. In such a case, we could use an adaptive, unsupervised machine learning algorithm. In our example use case we chose the non-parametric statistical method Kernel Density Estimation (KDE). This KDE algorithm was adapted to detect different kinds of anomalies within streaming data, i.e., sensor signals, and is suitable for running on edge devices like the ctrlX CORE.

- It detects point anomalies via their probability of occurrence.

- A drift detection is realized by tracking the long-term movement of the kernels.

- A detection of collective anomalies is implemented for cyclic data and can successfully detect anomalies within production cycles.

- The contextual anomalies are detected via their probability of occurrence in a multidimensional space.

A running simulation model that generates the sensor signals to be monitored can be coupled with the anomaly detection algorithm running as an app on ctrlX CORE. Faulty values can be manually triggered in the simulation environment, allowing the anomaly detection algorithm to be tested in a realistic environment before deployment in the real plant.
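The point-anomaly case and the fault-injection test described above can be sketched in a few lines of Python. This is a simplified illustration, not the extended KDE algorithm from the referenced work: it only covers point anomalies (no drift, collective, or contextual detection), and the class name `KdeAnomalyDetector`, the window size, bandwidth, threshold, and the synthetic sine signal standing in for the simulation data are all assumptions.

```python
import math
from collections import deque


class KdeAnomalyDetector:
    """Sliding-window Gaussian KDE that flags low-density samples as point anomalies."""

    def __init__(self, window=200, bandwidth=0.5, threshold=1e-3, warmup=30):
        self.samples = deque(maxlen=window)  # most recent samples considered normal
        self.bandwidth = bandwidth           # kernel width h
        self.threshold = threshold           # minimum density for a normal sample
        self.warmup = warmup                 # samples collected before scoring starts

    def density(self, x):
        """Estimate the probability density at x from the samples in the window."""
        if not self.samples:
            return 0.0
        h = self.bandwidth
        norm = 1.0 / (len(self.samples) * h * math.sqrt(2.0 * math.pi))
        return norm * sum(math.exp(-0.5 * ((x - s) / h) ** 2) for s in self.samples)

    def update(self, x):
        """Score x against the current model, then learn it only if it looks normal."""
        if len(self.samples) < self.warmup:
            self.samples.append(x)
            return False
        is_anomaly = self.density(x) < self.threshold
        if not is_anomaly:
            self.samples.append(x)
        return is_anomaly


# Hypothetical stand-in for a simulated sensor signal
signal = [10.0 + math.sin(0.1 * i) for i in range(500)]

# Manually injected fault, comparable to triggering a faulty value in the simulation
fault_index = 300
signal[fault_index] = 25.0

detector = KdeAnomalyDetector()
detected = [i for i, value in enumerate(signal) if detector.update(value)]
print("anomalies detected at sample indices:", detected)
```

Anomalous samples are deliberately not added to the window, so a single fault does not shift the model of normal behavior. Bandwidth and threshold are examples of parameters that could be tuned against simulated data before the real plant exists.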

Ultimately, we were able to trigger a fault (1) and detect the resulting anomaly during the virtual commissioning of the machine. The following picture shows the flying workpiece in the iPhysics visualization (2) as well as the anomaly detected in the computation on the real-time data (3).

The Achievements – In Short

Once again, the open ecosystem of ctrlX AUTOMATION has shown its diverse approaches to today’s challenges. We were able to tackle new, complex use cases around virtual commissioning by combining hardware data and software data through the ctrlX Data Layer on ctrlX OS. This time, we enabled real-time insights not only into the heart of the machine being commissioned, but also into its entire vascular system, using Edge Computing approaches in distributed systems of the IIoT.

All required algorithms were encapsulated in ctrlX OS apps and brought together with the powerful virtual commissioning tool iPhysics through the ctrlX Data Layer on ctrlX OS. By combining hardware data and software data, we gained real-time insights into machine processes and optimized behavior early in the product life cycle with hardware-in-the-loop simulation. With machine learning and edge computing algorithms, the optimal behavior of a machine was achieved more easily, more efficiently, and with less risk.

What’s Next?

Discover and contact our ctrlX World Partner machineering and their iPhysics software.

Read up on our latest technologies and services in Virtual Engineering.

Read and download our published journal articles:

- Rosenberger, Julia; Dr. Andreas Selig; Dr. Mirjana Ristic et al. (2023). "Virtual Commissioning of Distributed Systems in the Industrial Internet of Things". In: Sensors 23.7.

- Rosenberger, Julia; et al. (2022). "Deep Reinforcement Learning Multi-Agent System for Resource Allocation in Industrial Internet of Th...". In: Sensors 22.11.

- Rosenberger, Julia; et al. (2021). "Extended kernel density estimation for anomaly detection in streaming data". In: Procedia CIRP 112. 15th CIRP Conference on Intelligent Computation in Manufacturing Engineering, 14-16 July 2021, pp. 156–161.

Go deeper into machine learning with our article How to run Machine-Learning Models on ctrlX CORE.
